Billy Milam is CEO of Consulting Solutions, a nationally recognized leader in technology workforce and consulting services with key practice areas in Digital Transformation, ERP, Cloud and Data, Cybersecurity, AI and Advanced Analytics, and Energy and Engineering. The company’s scalable engagement models — from individual technology consultants to strategic enterprise programs — enable clients to tap into world-class talent, expertise, and services to drive technology and enterprise transformation initiatives.

Here, Milam shares his perspective on how AI and cybersecurity are reshaping workforce strategies — and what organizations must do to keep pace.

M.R. Rangaswami: AI is changing how work gets done across nearly every function. What impact are you seeing on the technology workforce specifically?

Billy Milam: AI is accelerating a shift that was already underway — from task-based technology roles to outcome-based roles. We’re seeing demand move away from purely execution-focused positions and toward professionals who can interpret, guide, and operationalize AI-driven insights. That means stronger emphasis on critical thinking, domain expertise, and the ability to work alongside intelligent systems rather than compete with them.

At the same time, AI is compressing timelines. Projects that once took months are now expected in weeks, which is putting pressure on teams to be more adaptable. Organizations are responding by rethinking team structures — blending traditional engineering roles with data, security, and AI specialists — and placing a premium on talent that can bridge those disciplines. The workforce isn’t shrinking, but it is becoming more specialized and more fluid.

M.R. Rangaswami: Cybersecurity threats continue to evolve alongside AI. How is this changing the types of talent organizations need?

Billy Milam: Cybersecurity is no longer a standalone function — it’s embedded in everything. As AI introduces new efficiencies, it also expands the attack surface, which means organizations need security-minded professionals across the entire tech stack, not just in dedicated security roles.

What’s also changed is the need for proactive versus reactive skill sets. It’s less about responding to incidents and more about anticipating vulnerabilities, especially as AI systems become part of core operations. That requires talent who understand both the technical side — like threat detection and architecture — and the business implications of risk. We’re also seeing increased demand for professionals who can translate security into business language. Boards and executive teams are more engaged than ever, so the ability to communicate risk, compliance, and resilience in a strategic context has become just as important as technical expertise.

M.R. Rangaswami: With shifts like these, what should companies be doing to future-proof their workforce?

Billy Milam: The biggest mistake companies can make is treating this as a hiring problem alone, when it’s really a workforce strategy issue. Upskilling existing employees is just as critical as bringing in new talent, especially in areas like AI literacy and security awareness, which need to extend beyond technical teams. Organizations should also be thinking in terms of adaptability. The pace of change means job descriptions written today may be outdated in a year. Building a culture that supports continuous learning — and giving teams exposure to cross-functional experiences — helps create a more resilient workforce.

Finally, there’s a growing need for partnership. Whether through consulting firms, training programs, or ecosystem collaborations, companies don’t have to solve this alone. The best approach is to combine internal development with external expertise to stay aligned with where technology — as well as risk — is heading.

M.R. Rangaswami is the Co-Founder of Sandhill.com

Timothy State is the founder and CEO of Altius, where he focuses on helping organizations improve performance through better health and well-being.

He has spent more than two decades working in and around workplace health, leadership, and organizational effectiveness. His work often sits at the intersection of business strategy and employee well-being, with a focus on practical outcomes rather than theory alone. He has contributed to broader industry conversations through organizations like the Business Group on Health and the American Heart Association.

In this conversation, we get a clear sense of Timothy’s outlook on how companies need to move beyond traditional wellness programs and toward more continuous, personalized support systems that can scale across organizations.

M.R. Rangaswami: Organizations have spent billions on employee wellbeing programs over the past decade, yet disengagement and burnout remain widespread. What fundamental assumption about workplace wellbeing do you believe companies have been getting wrong?

Timothy State: The disengagement and burnout epidemic has several root causes. Despite many positive efforts out there, a common mistake has been approaching workforce wellbeing as a program instead of a core strategic asset. It is critical to understand it as something concrete and predictable that can be produced and improved via a system of inputs we can directly guide.

Like any other vital, competitive business resource, it requires effective, real-time measurement, influence at scale, and predictive intelligence to remain proactive with interventions.

Isolated point solutions like mental health apps, fitness challenges and surveys, for example, may have their individual value, but these efforts rarely come together to see or address the wider, upstream drivers of performance. They tend to be parts of a fragmented system that doesn’t engage employees at scale and leaves leaders uncertain about what actually improves outcomes. That also makes for a hazy ROI picture on what drives real value.

At Altius, we see elevating the quality of the “human system” as being foundational to high, sustained organizational performance. The collective evidence and our own experience are clear on this. Declines in human life quality in the workforce reduce productivity, engagement, and retention, while driving population health costs and a range of other negative effects on consumer experience.

Yet most organizations still measure workforce wellbeing episodically (if at all) using surveys or narrow program participation metrics, seeing lagging glimpses rather than a continuous and predictive signal of their workforce’s holistic condition.

The needed shift is moving from an isolated programs-view to constant upstream visibility into the human system. Via technology, real-time signal on human life quality at scale enables predictive guidance to individuals and proactive decisions for organizations. This transforms human wellbeing from a cost center into a driver of measurable business performance.

M.R.: Most HR technologies today measure engagement through surveys or episodic check-ins. You’ve argued that continuous conversational data changes how organizations understand the “human system.” Why is this shift so significant?

Timothy: Many organizations rely heavily on legacy tools such as annual and pulse surveys, which capture static snapshots of sentiment. These miss the dynamic nature of work and life, and by the time data is analyzed, the relevant moment has often passed.

Embedding private, conversational AI that is really good at helping people improve their life quality also enables organizations to shift from episodic measurement to continuous understanding. Natural coaching interactions with an AI Life Quality companion like ours – Alti™ – generate richer insights and influence into personal wellbeing, engagement, and performance – especially when combined with other contextual data. This enables proactive, hyper-personalized guidance to individuals, and generates real-time patterns and predictive intelligence at a population level to drive smart employer decisions and interventions.

This shift is significant because it makes the actual “human system” much more discoverable. Continuous insights, rooted in a scientific model for human life quality, help organizations clearly identify risk signals early, take more intelligent actions, and understand how wellbeing, engagement, and productivity interact. It moves the focus from reactive surveys to proactive human optimization.

M.R.: As AI transforms the workplace, many leaders are focused on automation and efficiency. You often talk about AI as a tool to elevate human potential instead. How do you see AI reshaping the relationship between people, performance, and organizations over the next decade?

Timothy: The next decade will seem like several decades packed into one, given the rate of disruptive change and the scale of opportunity. Extreme and negative scenarios get written about a lot. However, there is a positive, pro-human and pro-growth version of the future we need to clearly envision now and build toward.

Current AI discussions focus heavily on task automation and replacement, process efficiency or human labor reduction. Leaders have a responsibility to consider all these. However, true transformation lies in using AI to elevate human potential, unlock the increased value employees can bring, and achieve much better understanding and influence over how human-generated value reaches the end consumer. How we think, contribute, collaborate and thrive in work is going to change massively. Our opportunity is to apply wisdom and agency to strengthen the human system and build long-term competitive value.

1. Personalized Intelligence: From Tool to Cognitive Partner

Over the next decade, AI will function as hyper-personalized intelligence – a continuous, context-aware partner that helps us navigate both work and life more effectively. The point won’t be to outsource our thinking, but to help us think and act better, more clearly, and with greater order.

For employees, AI becomes a force multiplier: real-time guidance, improved access to resources, collaboration and personalized support that meets people where they are. For leaders, this is profoundly de-burdening. The relationship between manager and team member shifts from periodic check-ins to continuous, intelligent enablement.

We move from performance measurement to performance amplification.

2. The Human System: From Hierarchy to Organizational Intelligence

The second shift is structural. AI collapses coordination constraints, simplifying hierarchies while improving decision speed and quality. Managers are freed to focus on judgment, mentorship, and developing people.

At the same time, AI makes the human system more visible and connected, revealing how wellbeing, engagement, and productivity interact as one system.

3. Human Potential as a Compounding Asset

This is the most consequential shift. Human capital begins to appreciate rather than depreciate when people are supported with tools that accelerate learning, health, and contribution.

AI also shortens the feedback loop between employee actions and customer value, allowing organizations to innovate faster and more precisely.

Leading in this era means building environments where people become healthier, more capable, and more impactful – and where human-generated value is actively cultivated.

M.R. Rangaswami is the Co-Founder of Sandhill.com

A leader in hybrid, full-stack infrastructure observability, Paul brings career expertise in scaling high-growth businesses that fuels Virtana’s mission to advance observability for AI-powered and hybrid IT environments, establishing the company as a trusted partner to Global 2000 enterprises.

A seasoned technology executive, Paul has a track record of building high-performing teams and driving profitable growth across AI factories, IT infrastructure, cloud platforms, and enterprise applications at some of the industry’s most respected companies.

Prior to joining Virtana, Paul held leadership roles at Elastic, SAP, Salesforce, and BMC, where he led customer-focused teams and shaped successful growth strategies.

On a personal note, Paul splits his time between San Francisco and New York, where he enjoys spending time with his two college-aged children. He serves on the board of the John Maclean Foundation and is an avid surfer, skier, and cyclist.

M.R. Rangaswami: For readers who may not be familiar with Virtana, can you give us a quick picture of what the company does and the market you operate in?

Paul: The world’s largest banks, telcos, healthcare systems and airlines run on extraordinarily complex digital infrastructure with hundreds of interdependent services, networks, databases and compute environments all operating simultaneously. When those systems degrade or fail, the result is more than a technical incident. The impacts are business-critical events that halt revenue, disrupt customer experience and put brand reputation at risk.

Virtana operates in the observability market, a category of software that monitors, analyzes and helps organizations govern their entire technology environment. However, observability is undergoing a fundamental shift. Legacy approaches focused on monitoring discrete components in isolation: the network, the application and the storage layer. That model was built for a simpler era and it is no longer sufficient. AI has turned this evolution into an operational mandate, as system-level understanding is now required to achieve autonomous IT operations and control performance, cost and risk.

Virtana delivers end-to-end AI-powered observability across the full system, including AI infrastructure. We collect approximately 20,000 metrics in subsecond time, use AI agents to discover system components and map their dependencies, and identify the root cause of performance degradation or failure in hours rather than days. Our platform helps organizations reduce mean time to resolution, eliminate infrastructure waste and operate their most critical services with the visibility and control that modern enterprise demands.

The Global 2000 relies on us for operational excellence, which is what determines enterprise value at scale.

M.R.: “AI factories” are the next evolution of enterprise infrastructure, where AI workloads move from experimentation to full-scale production. Given that roughly a quarter of AI jobs fail today, what fundamentally breaks when organizations try to industrialize AI and what distinguishes the companies that are successfully making that transition?

Paul: The shift from AI experimentation to industrialization is where most organizations encounter their first serious operational reckoning. For the past several years, enterprises have been running AI in controlled and relatively forgiving environments: cloud sandboxes, pilot programs and proof-of-concept deployments. Those environments masked a problem that scale now exposes. The infrastructure governance required to run AI in production is categorically different from anything most organizations have built before.

An AI factory is not simply a cluster of GPUs. It is a highly complex interdependent system spanning compute, networking, storage, orchestration layers and the workloads themselves, all operating under continuous load with real business outcomes riding on its performance. When 25% of AI jobs fail, the cause isn’t the model. It’s the lack of end-to-end system visibility. Organizations cannot identify where jobs are stalling, which dependencies are creating bottlenecks or whether the infrastructure is operating efficiently because they are still relying on legacy monitoring tools designed to watch discrete components rather than govern interconnected systems. AI doesn’t break at scale because models fail. It breaks because the system running them cannot see or manage its own constraints.

The companies making the transition successfully share a common discipline: they treat the AI factory as infrastructure. They have invested in observability that captures telemetry across the entire system, uses AI agents to identify risk and causality in real time and provides the controls needed to ensure that AI workloads are available, performant and efficient. They have also closed the disconnect we consistently see in our research, where executives believe their organizations are AI-ready while their IT leadership recognizes significant gaps in governance and operational readiness.

M.R.: CIOs are under pressure to simultaneously deliver more powerful AI capabilities while reducing operational costs. In an AI factory model, where are the biggest hidden inefficiencies today, whether in GPU utilization, energy consumption or orchestration, and how should leaders rethink ROI when the cost of under-optimized infrastructure is so high?

Paul: The tension CIOs are facing is real. They are being asked to scale AI capabilities rapidly while reducing operational costs. That looks contradictory, but it isn’t if the system is properly understood end to end.

The biggest inefficiencies in AI factories today are not where most leaders are looking. GPU utilization is the most visible example, but it is often misunderstood. These are expensive assets, yet in many environments they are idle or fragmented because workloads are not orchestrated at the system level. The issue is not procurement. It is the lack of visibility into how GPUs are actually being used relative to the workloads they support.

Energy consumption is a related challenge. Underutilized or poorly scheduled workloads drive unnecessary power, cooling and data movement costs across the entire environment. This is not just a sustainability issue. It is a direct consequence of system inefficiency. Where leaders need to rethink ROI is in how they measure value. It is no longer about how much is spent on infrastructure or even how busy it is. It is about what the system is producing.

Metrics like token throughput, cost per inference, job completion rates and workload efficiency are far more meaningful indicators of return. Without connecting those outcomes to the underlying infrastructure and system behavior, inefficiencies remain hidden and costs continue to scale. The organizations that get this right are the ones that move beyond monitoring individual components and instead understand how the entire system operates.

That is what allows them to reduce cost and scale AI at the same time.
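As a rough illustration of the outcome-oriented measures Paul names (token throughput, cost per inference, job completion rate, and GPU utilization), here is a minimal Python sketch. The `FactoryWindow` record, its field names, and the sample numbers are all hypothetical and do not describe Virtana's platform or data; the formulas are simple ratios chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class FactoryWindow:
    """Hypothetical telemetry for one reporting window of an AI cluster."""
    window_seconds: float        # length of the reporting window
    tokens_generated: int        # output tokens served in the window
    inferences: int              # inference requests served
    jobs_submitted: int          # jobs started in the window
    jobs_completed: int          # jobs that finished successfully
    gpu_hours_allocated: float   # GPU-hours reserved for workloads
    gpu_hours_busy: float        # GPU-hours spent doing useful work
    infra_cost_usd: float        # infrastructure spend for the window


def outcome_metrics(w: FactoryWindow) -> dict[str, float]:
    """Turn raw counts into the ratios discussed in the interview."""
    return {
        "token_throughput_per_s": w.tokens_generated / w.window_seconds,
        "cost_per_inference_usd": w.infra_cost_usd / w.inferences,
        "job_completion_rate": w.jobs_completed / w.jobs_submitted,
        "gpu_utilization": w.gpu_hours_busy / w.gpu_hours_allocated,
    }


# Made-up one-hour window in which a quarter of the jobs fail.
sample = FactoryWindow(
    window_seconds=3600,
    tokens_generated=7_200_000,
    inferences=50_000,
    jobs_submitted=100,
    jobs_completed=75,
    gpu_hours_allocated=64.0,
    gpu_hours_busy=40.0,
    infra_cost_usd=500.0,
)
metrics = outcome_metrics(sample)
for name, value in metrics.items():
    print(f"{name}: {value:.3f}")
```

Putting these ratios side by side ties spend and utilization to what the system actually produces, which is the shift in ROI thinking described above.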

Kamesh Pemmaraju is the Founder and Managing Partner of OptimaGTM, a GTM systems consulting firm, focused on a specific and urgent problem: B2B and AI startups unable to reach predictable growth/scale after seeing some initial market traction.

In our conversation, Kamesh shares his diagnosis of why early traction stops working, why the instinct to add headcount or tools rarely solves the real problem, and what it actually takes to achieve repeatable growth in an investing environment that has shifted decisively toward GTM efficiency over growth at all costs.

M.R. Rangaswami: What are you seeing break down for B2B and AI founders that are scaling to achieve repeatable growth, and what actually causes it?

Kamesh Pemmaraju: The pattern is almost identical across companies. They’ve hit $1M or $2M on founder-led sales, strong early relationships, genuine problem-market fit (initial validation of opportunity and demand). The instinct that got them there was right. Then the same instinct becomes the ceiling.

What breaks when it comes to scaling the business is that their Go-to-Market was never designed as a cohesive end-to-end system that connects their product decisions with sales, marketing, and customer success execution.

When functional leaders optimize locally without a shared system connecting them, decisions start to conflict instead of compound. Marketing generates activity that sales can’t convert. Product ships features that customers don’t adopt. The founder, who used to be the thread holding everything together, becomes the bottleneck instead.

By the time we’re brought in, the typical reaction has already been tried — another analytics tool, a new hire, a strategy offsite. None of it works because those are function-level responses to a system-level problem. You can’t fix a structural gap one department at a time.

M.R.: The B2B buying environment has changed significantly. How does that change what founders actually need to do differently?

Kamesh: Three things have shifted simultaneously, and most founders are only seeing one of them.

The first is structural. Buyers now spend only 17% of their time meeting with vendors. The rest happens independently — through peer forums, analyst reports, and AI-powered search — before a founder ever gets in the room. 43% of buyers make defensive purchase decisions 70% of the time. They’re not asking “is this the best product?” They’re asking “can I defend this choice if it goes wrong?” SQL-to-close rates have dropped 5-6 percentage points year over year as a result. The issue isn’t lead generation. It’s that founders are running persuasion playbooks against buyers who need validation.

The second is specific to AI products, and it’s the one most founders aren’t prepared for. Buyers are using AI themselves and are starting to deploy agents extensively. That changes the conversation in the room completely. They’ve moved past being impressed by “AI-powered.” They know enough about how these systems work to be genuinely skeptical of vendor claims. They want to know precisely where AI sits in the workflow and what it actually does. And the objection that didn’t exist three years ago is now one of the first ones you hear: “why can’t we just build this ourselves?”

The third is that novelty is gone. We’re in what ICONIQ calls the Execution Era. Buyers have moved from curiosity to ROI scrutiny. The demo doesn’t close the deal. Customer adoption data does. Case studies from companies in their exact vertical do. Being cited in an AI search when their procurement team researches the category does — because that signals market legitimacy without a vendor saying so.

When all three converge, the old playbook doesn’t just underperform. It actively works against you. The GTM system has to be redesigned around validation, not persuasion. That’s a structural change, not a messaging tweak.

M.R.: What does predictable revenue actually look like at this stage, and what separates the companies that get there from the ones that don’t?

Kamesh: Predictable revenue is not a forecast that happens to be accurate. It’s an output of a system that was designed to produce a specific result.

The shift starts with one decision: stop treating GTM as a series of campaigns and start treating it as a product with a lifecycle. Every great product goes through the same stages — defined, built, launched, measured, iterated, and expanded. GTM works exactly the same way, but most companies skip the first three steps entirely. They launch motion before they’ve defined who they serve, why they win, and how their product creates measurable value. Then they wonder why conversion doesn’t improve no matter how hard the team works.

Following the lifecycle means sequencing correctly. Define your ICP and positioning before building any motion. Design the full system — from demand generation through sales through customer expansion — before running it. Launch with enablement in place, not after. Measure at the system level: pipeline quality, win rates, customer time-to-value, CAC payback. Run a quarterly review to diagnose drift before it becomes expensive. And design explicitly for expansion — NRR and upsell don’t happen by accident, they have to be built into the system from the start.

The companies that get this right understand one thing: acquisition feeds retention, retention feeds expansion, and expansion feeds the next acquisition cycle. When those stages are connected and measured together, revenue becomes forecastable. When they’re treated as separate campaigns owned by separate functions, you get motion without momentum.

The founders who make it to $20M are not the ones who worked harder. They’re the ones who built the system first — and then ran it.
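To make the system-level measures Kamesh mentions more concrete (win rate, CAC payback, and NRR), the sketch below uses commonly cited textbook-style definitions. These formulas, the function names, and the sample figures are assumptions for illustration; the interview does not specify how OptimaGTM calculates them.

```python
def win_rate(deals_won: int, deals_closed: int) -> float:
    """Share of closed opportunities (won plus lost) that were won."""
    return deals_won / deals_closed


def cac_payback_months(
    sales_marketing_spend: float,
    new_customers: int,
    monthly_revenue_per_customer: float,
    gross_margin: float,
) -> float:
    """Months of gross-margin revenue needed to recover acquisition cost."""
    cac = sales_marketing_spend / new_customers
    return cac / (monthly_revenue_per_customer * gross_margin)


def net_revenue_retention(
    starting_arr: float,
    expansion: float,
    contraction: float,
    churn: float,
) -> float:
    """ARR kept and grown from an existing cohort, as a ratio of its starting ARR."""
    return (starting_arr + expansion - contraction - churn) / starting_arr


# Made-up quarter for a hypothetical startup.
wr = win_rate(deals_won=12, deals_closed=48)
payback = cac_payback_months(
    sales_marketing_spend=300_000,
    new_customers=20,
    monthly_revenue_per_customer=1_250,
    gross_margin=0.8,
)
nrr = net_revenue_retention(
    starting_arr=1_000_000, expansion=200_000, contraction=30_000, churn=50_000
)
print(f"win rate {wr:.0%}, CAC payback {payback:.1f} months, NRR {nrr:.0%}")
```

Tracking the three together reflects the point that acquisition, retention, and expansion are stages of one connected system rather than separate campaigns.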

M.R. Rangaswami is the Co-Founder of Sandhill.com

Jim LaRoe is Symphion’s dynamic CEO, whose special combination of skills, experience and insight has driven Symphion’s growth since inception into the world’s leader in print fleet cyber security. Symphion’s focus has been on continual innovation, seamless delivery, affordability of its solutions and a dedication to excellence in customer service.

In today’s interview, Jim discusses the hidden risks in print fleets and why they matter for enterprise security. If an insider intended to inflict harm on a business, the first stop would be the business’s printers. According to Jim, they are the softest targets of all and jackpots for bad actors. It takes only one printer to bring down an entire business, whether through a front-page news event, quiet theft of valuable data, or plain sabotage. It starts with the fact that printers receive, transmit, process and store the most sensitive data of the business and its customers.

M.R. Rangaswami: Why are print fleets still a risk in 2026, and why are they often overlooked?

Jim LaRoe: “Every criminal loves winning lottery numbers. For printer endpoints (any device that creates an image, electronic or otherwise), those numbers are 20-99-1-360-67.” Allow me to explain why.

– 20: They account for 20% of known network endpoints.

– 99: Ninety-nine percent of them are completely unprotected, managed at open factory defaults, including admin passwords published on the internet.

– 1: It only takes one unprotected printer to take down an entire business.

– 360: The threat landscape is 360 degrees, ranging from exposed to the internet with devices “phoning home” to the OEM to stored adjacent system admin passwords to physical USB port walk-up access.

– 67: One leading analyst surveyed businesses, and 67% of the 2,000 surveyed reported a printer-related security event in the past year.

For a long time, the ethical hackers have talked about how easy printers are to exploit. Now you add in AI-enabled hacks where the AI already knows each exploit and admin password. It’s like pouring jet fuel on a raging fire of risk. They’re overlooked because of the 5 O’s.

- Origin: They started as analog devices and are still viewed as business equipment procured and managed by supply chain instead of the complex computing devices that they are.

- Owner: There is no owner of printer endpoint protection, no budget, no enforcement. This is our biggest lift–communicating with prospects.

- Organization: The organization is not there. They have not decided to protect these forgotten network endpoints. There are no audits beyond minimal external pen tests, and even if there is a control set, there is no enforcement. Most don’t even have an accurate inventory.

- IOT: Printers are Internet of Things devices. There has been no common management platform for security. Each brand, model and firmware version is different with different configurability and that can change with an update.

- Opportunity: Printers are not career-advancing technology for anybody, including the leaders who would ask for a budget to protect them. They are perceived as graveyard endpoints. Even when protection is chosen, there is no available talent pool beyond toner and break-fix skill sets. Also, everybody thinks that they’re digitized, like in healthcare with EMR. Nothing could be further from the truth: printers still support core business functions such as patient care delivery.

M.R.: Where do organizations most often fall short when it comes to securing print fleets that are otherwise considered “managed”?

Jim: Many fleets are “managed”; they’re just managed for print service delivery costs, such as toner supplies, break-fix, and new devices. They confuse managed for cost with managed for protection.

While the features to harden printers are built in and there are well-established standards for securing them, those capabilities are rarely applied in practice. That’s problematic because printers receive, transmit, process, and store some of the most protected and sensitive information in the enterprise, have trusted access to systems like LDAP, email, and file servers, and yet are largely unmonitored.

The multi-billion-dollar, very mature “managed print services” industry competes in a highly commoditized, cost-driven market largely run by supply chains. At best, providers take a “set and forget” approach, configuring devices at installation and never revisiting protections. Most fleets are left at factory defaults for ease of operation. Nobody is auditing. Even when information security mandates controls, they often stall in an RFP and are never fully implemented or validated. Meanwhile, OEMs tout security features that ultimately go unused. The outcome is predictable: printers remain dark, over-trusted, and under-protected, even in organizations with otherwise strong security programs.

That’s the gap we focus on closing.

M.R.: What is the practical first step organizations should take to reduce this risk?

Jim: It’s organizational. Assign an owner. Allocate a budget. Design a protection program that fits your budget, your business, your regulatory mandates, and get rolling. The printers aren’t going away, and the stakes continue to get higher. We’re on a mission to raise awareness of this issue and what can be done to address it. I do 45-minute consults every day with businesses owning large print fleets. We discuss their goals, constraints, the options, approximate

M.R. Rangaswami is the Co-Founder of Sandhill.com

Our friends at Allied Advisers have published this report to explore why the “SaaSpocalypse” narrative may be overstated and how SaaS and AI can coexist to create value for vendors, customers, and investors.

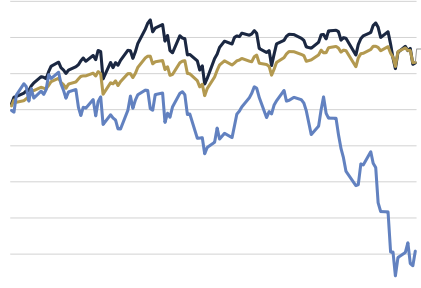

In summary, the SaaS industry is undergoing its most significant transformation in a decade. In early February 2026, over $285 billion in software market value was wiped out. The launch of Claude Cowork intensified concerns that agentic AI could undermine traditional SaaS models. Skepticism around SaaS economics and customer value had already been building, but AI amplified the “SaaSpocalypse” narrative and accelerated a structural shift beyond market volatility, reshaping buyer expectations from access to outcomes.

The key question: Is AI truly displacing SaaS? Pricing is evolving, moats weakening, and software build costs falling. Yet the story is more complex than headlines suggest. The structural strengths and resilient business models of SaaS companies point to a new phase of evolution rather than collapse. The future is not AI versus SaaS, but their convergence.

Our friends at Software Equity Group have pulled together their Annual SaaS Report, and as always, their tone is positive, their outlook is assertive and we appreciate all the insights they have to offer.

Here are five highlights from their report:

1. Aggregate software M&A activity reached a historic peak in 2025

A total of 4,629 transactions were announced, representing 26.4% YoY growth and surpassing the prior 2022 peak by roughly 1,000 deals. This surge reflects not only improving buyer confidence and more stable financing conditions, but also structural shifts reshaping the software market. Lower barriers to software creation and rapid AI adoption have expanded both company formation and acquisition activity, broadening the M&A universe and sustaining elevated annual deal volumes.

2. SaaS M&A activity also reached its highest level on record in 2025

SaaS deal count reached 2,698, supported by strong and consistent quarterly volume throughout the year. SaaS transactions accounted for approximately 58% of total software M&A, consistent with recent years and near all-time highs. While SaaS’s share of total activity has remained stable, absolute deal volume continues to rise as overall software and AI-related M&A expands.

3. A+ SaaS businesses continued to command outlier valuations in 2025

These businesses, defined by mission-critical platforms, high retention, strong ARR growth, and Rule of 40 or higher, saw several notable transactions completed at double-digit revenue multiples. These included ServiceNow’s acquisitions of Veza Technologies (40.0x) and Armis (22.8x), Palo Alto Networks’ acquisitions of CyberArk (22.0x) and Chronosphere (20.9x), and Veeam Software’s acquisition of Securiti AI (23.0x). Together, these deals reflect intense competition among well-capitalized buyers for scarce, strategically critical assets.

4. SaaS M&A valuations expanded for the second consecutive year

With the average EV/TTM revenue multiple rising to 6.9x while median multiples remained tightly clustered around ~4.0x. This widening gap highlights an increasingly barbell-shaped market, with deal activity concentrated at both premium, double-digit multiples and lower single-digit outcomes. Valuation expansion has been driven by easing interest rates, strong operating performance, and heightened competition from investors deploying significant dry powder vying for scarce assets.

5. Buyers pursuing strategic angles accounted for approximately 92% of all deals

Regardless of buyer backing, buyers pursuing strategic angles accounted for approximately 92% of all deals, underscoring that strategic rationale is driving M&A activity. Additionally, private equity and venture capital remained highly active, participating in roughly 58% of SaaS M&A deals through direct investments and portfolio add-ons.

Many high-quality public SaaS companies have become acquisition targets as public valuations lag improving fundamentals. This dynamic fueled a sharp rise in take-private activity, including multiple large transactions led by Thoma Bravo, TPG, and Centerbridge, alongside strategic acquisitions by Adobe and IBM.

Importantly, this remains a bullish signal, reflecting strong conviction that public SaaS assets are trading below intrinsic value.
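As a quick sanity check on the highlights above, the sketch below recomputes the SaaS share of software M&A from the two deal counts quoted in the report, and illustrates why an average multiple can sit well above the median in a barbell-shaped market. The deal counts come from the text; the list of multiples is entirely hypothetical and is not SEG data.

```python
from statistics import mean, median

# Deal counts quoted in highlights 1 and 2.
total_software_deals = 4629
saas_deals = 2698

saas_share = saas_deals / total_software_deals
print(f"SaaS share of software M&A: {saas_share:.1%}")

# Hypothetical EV/TTM revenue multiples (NOT SEG data): most deals cluster
# in the low single digits, while a few premium deals sit at double digits.
hypothetical_multiples = [1.5, 2.0, 2.5, 3.5, 4.0, 4.0, 4.5, 12.0, 20.0, 22.0]

average_multiple = mean(hypothetical_multiples)
median_multiple = median(hypothetical_multiples)

# A handful of outliers pulls the average up while the median barely moves.
print(f"Average: {average_multiple:.1f}x, median: {median_multiple:.1f}x")
```

With these made-up inputs the average lands well above the median, the same pattern as the 6.9x average and ~4.0x median described in highlight 4.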

To download the full SEG report, click here.

Our friends at Allied Advisers are pleased to present this report on M&A activity in the AI sector in 2025. AI deal activity accelerated sharply in 2025, with total deal value reaching ~$61B, almost double 2024 levels. This growth is fueled by fast-moving GenAI/LLM innovations, the race to build AI infrastructure, increasing pressure on enterprises to deliver tangible AI results, and a shortage of specialized AI talent.

This surge raises a key question: where is deal activity concentrated most in the AI space? In 2025, M&A activity is primarily clustering around deployment-ready, scalable verticals – AI infrastructure, AI applications, and agentic AI. Big Tech buyers increasingly prefer a “buy over build” approach, securing scarce capabilities and talent and speeding time to market. Traditional enterprise software companies, meanwhile, are adopting a hybrid strategy: acquiring proven AI businesses while continuing in-house development, and using targeted M&A to integrate critical AI building blocks and accelerate product roadmaps.

Value creation is strongest where AI delivers measurable, near-term ROI, making coding and customer support (CX) the key valuation hotspots. These functions account for a large share of AI investment and command strong valuations, making category leaders appealing acquisition targets. Investors are also actively investing in niche AI categories, including LegalTech, humanoid robotics, neocloud, and frontier model platforms, where rapid scaling and rising valuations are expanding opportunities for both strategic M&A and selective IPO pathways.

Looking ahead, AI dealmaking is expected to remain centered on scalable, deployment-ready capabilities, where speed to execution and enterprise impact continue to drive strategic value.

See the full Allied Advisers report here.

Sadagopan Singam is a global business and technology leader with more than three decades of experience building, scaling, and governing large enterprise platforms and digital businesses.

He currently serves as Executive Vice President at HCLTech, where he leads the global SaaS, Enterprise Platforms, and Edge Services portfolio with full P&L responsibility across CRM, HCM, Supply Chain, Commerce, and digital process orchestration and management, including the Agentic AI–driven transformations within them.

Across his career, Sadagopan has pioneered multiple industry-leading practices, driven sustained growth across global businesses, and helped Global 2000 enterprises redesign operating models at scale. His work spans North America, Europe, Asia Pacific, Latin America, and emerging markets, where he has launched new regions, built global centers of excellence, and advised boards and executive teams on technology-led growth.

A recognized industry leader, he maintains deep relationships across the enterprise-software ecosystem, leading LLM model providers, and analyst communities, and serves on multiple technology advisory boards. He serves on the Forbes Technology Council and, over the past decade, has participated in several World Economic Forum working groups focused on digital technologies.

In his book, Agentic Advantage, he draws on his experience leading high-performance teams and managing multi-million-dollar revenue streams to offer leaders a definitive playbook for building the autonomous enterprise. This book reflects his conviction that autonomy must be intentionally designed, governed, and measured, not merely deployed.

M.R. Rangaswami: We have spent the last two years talking about Generative AI productivity, but your book focuses on “Agentic Advantage.” Why is this distinction the single most important pivot for a CEO to make in 2026?

Sadagopan Singam: The industry is currently moving past “pilot fatigue”. Most organizations have used GenAI as a “voice” to summarize or draft content, which provides incremental efficiency but doesn’t fundamentally change the P&L. In Agentic Advantage, I argue that the real breakthrough occurs when AI moves from conversation to autonomous action.

If GenAI is the voice, Agentic AI is the “hands” of the enterprise. We are transitioning to a world where autonomous agents orchestrate entire value chains—from real-time supply chain adjustments to automated financial reconciliation. For a CEO, this is the difference between minor time savings and achieving the 20%+ revenue growth and margin improvements we are seeing in market leaders today.

M.R.: You manage a multi-hundred-million-plus global portfolio across the world’s largest enterprise platforms. What is the “Silent Risk” that boards are missing when they scale these autonomous systems?

Sadagopan: The silent risk is the “Orchestration Gap”. Most enterprises are building AI in functional silos, but when autonomous agents operate within your SAP, Salesforce, and ServiceNow ecosystems simultaneously, they eventually collide. Without a unified “Agentic Governance” framework, you risk a digital “butterfly effect,” where an automated decision in the supply chain creates a catastrophic conflict in your CRM or HCM systems.

My book provides the blueprint for “Strategic Excellence”. Working with several marquee customers, and with highly innovative enterprise platforms focused on digital innovation, I’ve seen that getting it right requires agents that are not just autonomous, but fully aligned with your global P&L goals and compliance standards.

M.R.: You’ve lived and worked across three continents and led teams that achieved global leader recognition. How does “Agentic Advantage” change the way we think about global talent and the future of high-performance teams?

Sadagopan: We are entering the age of the “Hybrid Workforce,” but the metric for success is shifting. High performance will no longer be measured by the size of your team, but by “Agentic Density”—the ratio of human strategic oversight to autonomous execution.

As a global citizen who has built high-performance teams across North America, Europe, and APAC, I’ve seen that the most successful leaders of the future won’t be those who simply deploy AI, but those who orchestrate it. Agentic Advantage is about shifting the human role from “doing” to “directing”. We are building culturally diverse teams where humans focus on strategic “why” and “should,” while the agentic layer handles the “how” and “when” at an enterprise scale.

Buy Sadagopan’s title here: AdvantageWithGenAI.com.

M.R. Rangaswami is the Co-Founder of Sandhill.com

Piet Buyck is the Senior Vice President and Solution Principal at Logility, an Aptean company. A global technology executive with over 30 years of experience in supply chain management, Buyck has spent his career disrupting analog practices to address the messy reality of operations.

His work focuses on making artificial intelligence accessible and explainable, ensuring it keeps human decision-makers firmly in control.

In this interview, we discuss his new book, The AI Compass for Supply Chain Leaders, where Buyck outlines a framework for rethinking how we plan, align, and communicate across systems to navigate uncertainty.

M.R. Rangaswami: What is the most critical shift supply chain leaders need to make today?

Piet Buyck: Supply chain leaders are operating with better resources than ever before. Their teams are highly educated and well-trained, and their technology has never been more capable. Bringing these two together is the most critical shift that supply chain leaders need to make today.

Specifically, they need to move away from static, number-based planning tools like spreadsheets and reports toward a language of planning that uses natural language interaction and real-time collaboration to anticipate supply chain challenges and convert supply chain uncertainty into opportunities for competitive differentiation.

M.R.: Explain the difference between anti-fragility and resilience. How can leaders use AI to turn volatility into a competitive advantage?

Piet: Modern supply chains are extremely complicated. Even modest changes can cause a bullwhip effect upstream. Supply chain leaders understand this problem, and whether the trigger is a global pandemic, a blocked canal, or a regional conflict, the industry has worked very hard to become more resilient and withstand disruptions across many fronts.

Now, we need to go a step further.

Anti-fragility is a state where a supply chain withstands volatility and even benefits from it by assessing and capitalizing on change. AI allows teams to validate new assumptions against real-time events and remake plans in hours.

M.R.: There is often fear that AI will replace jobs. How should organizations view the relationship between AI and human planners to maximize results?

Piet: Rather than displacing people, AI moves them to the center of machine-assisted processes. As supply chain management becomes more digital, AI acts as a catalyst for Kaizen, the philosophy of continuous, incremental improvement.

For example, natural language interfaces make GenAI accessible to non-technical employees, allowing them to synthesize unstructured data and turn inscrutable numbers into workflow insights.

While agentic AI will eventually execute tasks like humans, it cannot assume responsibility. Consequently, while we will see fewer roles focused on data assembly, we will see a rise in roles centered on strategy, scenario planning, and decision-making.

Harshit Omar’s job is building the future of cloud infrastructure: enabling businesses to seamlessly migrate, replicate, and optimize workloads across multi-cloud environments.

Previously the first engineer at Accurics, where he led core development efforts on its policy engine and cloud security platform, Harshit brings expertise in Go, Kubernetes, Terraform, and cloud compliance. He has spent over a decade designing resilient systems across AWS, Azure, and GCP and now has a mission to eliminate cloud lock-in and make infrastructure as portable and resilient as code.

In our quick conversation, Harshit discusses how outages aren’t isolated events — they highlight a bigger structural issue in how enterprises depend on the cloud and are dramatically underestimating their risk exposure.

M.R. Rangaswami: Why are we seeing a rapid rise in large-scale cloud outages, and what do they reveal about systemic fragility in today’s cloud monoculture?

Harshit Omar: What we are seeing right now is not a series of isolated incidents. It is the breakdown of a long-held industry assumption that hyperscalers are effectively too big to fail. They are not. At this scale, even routine configuration issues ripple across millions of workloads instantly. AWS, Azure, and Cloudflare all experiencing issues within weeks is not a coincidence. It is a structural warning.

We called this trend years ago. The hyperscaler model has concentrated compute, storage, networking, AI workloads, and identity into centralized control planes with enormous blast radius. As the global workload footprint grows, the fragility grows with it. Outages are more common because complexity has exploded and enterprises have almost no buffer when the core infrastructure falters.

M.R.: Despite a decade of talk about multi-cloud, why do most enterprises still depend on a single cloud, and how does this amplify the impact of recent outages?

Harshit: Multi-cloud became an idea rather than an architecture. Many leaders said they wanted it, but the practical tooling to make it possible without rebuilding everything did not exist. Most organizations could not justify duplicating pipelines, infrastructure definitions, security policies, or observability frameworks in multiple places. Over time they drifted into single-cloud dependency without fully realizing it.

This is why outages feel so catastrophic. When AWS or Azure goes down, organizations do not simply wait. They are stuck. They cannot move workloads or fail over quickly because their environment truly exists in only one location. It is the digital version of building an entire city that depends on a single bridge.

This is the fragility we have been highlighting for years. The cloud became so successful that many teams forgot a basic rule of cloud-native architectures: resilience only exists when redundancy is created across boundaries rather than within them.
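The rule about redundancy across boundaries can be illustrated with a small sketch. It assumes a provider-wide outage and shows that a replica in another region of the same cloud does not help, while a replica on a different provider does. The `Deployment` type, the `pick_failover` function, and the provider and region labels are hypothetical and do not describe any specific product or API.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Deployment:
    provider: str   # label only, e.g. "aws" or "azure"
    region: str
    healthy: bool


def pick_failover(primary: Deployment, replicas: List[Deployment]) -> Optional[Deployment]:
    """Return the first healthy replica hosted on a different provider, if any."""
    for replica in replicas:
        if replica.healthy and replica.provider != primary.provider:
            return replica
    return None


# Assumed scenario: a provider-wide outage takes down every region of one cloud.
primary = Deployment("aws", "us-east-1", healthy=False)
same_cloud_only = [Deployment("aws", "us-west-2", healthy=False)]
cross_cloud = same_cloud_only + [Deployment("azure", "eastus", healthy=True)]

print(pick_failover(primary, same_cloud_only))  # no target: redundancy stayed inside one boundary
print(pick_failover(primary, cross_cloud))      # the replica on the other provider
```

The design choice here is deliberately strict: replicas on the primary's own provider are never considered, which mirrors the single-bridge analogy above.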

M.R.: What does a more resilient cloud architecture look like, and how can approaches like Cloud Cloning help enterprises respond instantly when a provider goes down?

Harshit: True resilience means your entire environment can run somewhere else without rewriting it. That is the standard enterprises now need. Until recently, that standard was out of reach for most organizations.

Cloud Cloning was created because we knew this moment was coming. Enterprises should not have to refactor workloads, rebuild pipelines, or recreate identity and access systems just to gain optionality. A cloned environment should be able to run in another cloud the moment you need it. Same architecture. Same configuration. Same governance. Just operating on a different provider.

If outages are becoming routine, portability becomes the primary defense strategy. The next generation of cloud architecture will not ask which cloud you are on. It will ask how quickly you can move when the cloud you depend on stops working.

M.R. Rangaswami is the Co-Founder of Sandhill.com

Aryan Poduri is a Bay Area high school senior who likes to build. He’s the author of GOAT Coder, a beginner-friendly programming book for children that has sold over 2,000 copies, thanks to its focus on making coding approachable, practical, and enjoyable.

In his words, Aryan is especially interested in how technology can be used to teach, empower, and bring people together, rather than intimidate them. When he’s not working on projects or thinking about his next idea, Aryan can usually be found playing basketball, watching football, experimenting with design and digital art, or working out.

He sees these hobbies as a creative reset: a way to step away from screens, move his body, and come back to problem-solving with a clearer head.

As we head into the holidays, we hope this conversation with Aryan inspires you to have conversations with the young people in your life about access and opportunities in tech; we can learn so much.

M.R. Rangaswami: In your opinion, what stops kids from getting into coding?

Aryan Poduri: One of the biggest roadblocks is that most kids simply don’t get early exposure. Coding is treated like some mysterious adult-only skill, when really it’s just another form of problem-solving. A lot of schools don’t have strong computer science programs, and even when they do, they usually start way too late. By the time students finally meet coding, they’ve already built this idea in their head that it’s “too hard” or “not for them.” It’s basically like giving someone a bicycle at 17 and saying, “Here, ride this like you were six.”

The other problem is the resources themselves. Most beginner books feel like they were written by robots trying to talk to other robots. Kids look at the first page, see a paragraph about “data types” or “object-oriented paradigms,” and immediately check out. It’s not that they don’t want to learn. It’s that the door is basically locked from the start. If you want kids to walk in, you have to make the entrance a little more inviting than a wall of academic vocabulary.

M.R.: Does giving kids more opportunities to learn coding matter if they’re not already thinking about it?

Aryan: Coding is becoming a basic skill like writing emails or understanding how not to burn toast. When kids learn to code early, they’re not just memorizing random commands; they’re learning how to think. They get better at breaking big problems into smaller ones, staying patient, and trying again when things fail. Those skills spill into everything else they do, whether it’s school, sports, or figuring out how to fix the family Wi-Fi (which instantly makes you the household hero).

On a broader level, expanding access to coding matters because it opens doors that many kids wouldn’t otherwise get. Not everyone grows up surrounded by tech or has a parent who can help them learn. When more kids have access, the tech world gets more voices, more creativity, and more people solving real problems from different angles. It’s basically the difference between a playlist with one song on repeat and an entire Spotify library. You just get way more possibilities.

M.R.: How do you hope GOAT Coder will help the next generation of coders?

Aryan: GOAT Coder exists because I got tired of seeing kids run into the same boring barriers I did when I first started learning. I wanted something fun. Something that felt more like a conversation than a textbook. So I wrote the book I wish younger-me could’ve opened: one with jokes, stories, and straightforward explanations that actually make sense. The whole point is to take away the fear factor and replace it with curiosity. If a kid finishes a chapter and thinks, “Wait, that was actually fun,” then I’ve basically won.

The book is my way of giving kids an easy entry point into a world that usually looks locked away behind complicated symbols and intimidating explanations. Instead of telling them, “Here’s everything you need to know before you start,” it says, “Let’s start, and you’ll figure things out as we go.” It’s a small step, but it helps build confidence, and confidence is everything in coding. If you can get a kid to believe they can do it, they usually will.

M.R. Rangaswami is the Co-Founder of Sandhill.com

Our friends at Software Equity Group have released their Q3 Quarterly SaaS Report.

With SaaS deal activity surging to record levels in 3Q25, they’re reporting that this last quarter has been the strongest SEG has ever tracked.

Year-to-date volume has surpassed 2,000 deals, putting 2025 on pace to exceed 2,500 total transactions, a new all-time high.

Here, we’ve pulled two noteworthy M&A highlights and two public market highlights from the report.

(For the full report, click here.)

SAAS M&A HIGHLIGHTS

1. SaaS M&A hit an all-time high in 3Q25 with 746 transactions, +26% YoY in 2Q25.

The acceleration reflects buyer confidence, pent-up demand, and improved financing. An expanding SaaS universe and private equity firms selling businesses to return capital have boosted deal supply, supporting record activity and a durable baseline for future SaaS dealmaking.

2. Private equity remains the dominant force in SaaS M&A, accounting for 58% of total transactions in 3Q25.

Consistent with long-term trends since 2020. The market remains led by private equity, sustaining deal velocity while strategic buyers continue to pursue acquisitions aligned with businesses showing growth opportunities in AI.

SAAS PUBLIC MARKET HIGHLIGHTS

1. The SEG SaaS Index™ continued to improve in 3Q25

The upper quartile alone gained almost +12% over the same period since April. The strong recovery over just five months highlights the resilience of leading SaaS companies, which continue to demonstrate durable revenue growth and expanding profitability despite intermittent macroeconomic shocks.

Notable outperformers this quarter included:

- Oracle (+33%)

- CS Disco (+20%)

- CrowdStrike (+19%)

- Domo (+16%)

2. Profitability leadership in 3Q25 remained concentrated in essential infrastructure and financial categories.

The SEG SaaS Index median EBITDA margin rose from 4.6% to 9.2% YoY, driven by categories such as ERP & Supply Chain (26.5%), Human Capital Management (23.8%), and Financial Applications (11.5%), which led the field with impressive EBITDA margins.

To download the full report, click here:

Clare Christopher is the editor at Sandhill.com

Our friends at Allied Advisers have released their sector update on hires in AI, observing how Big Tech has increasingly turned to mega acqui-hires: high-value strategic acquisitions focused on talent and IP rather than entire companies.

This shift enables rapid integration of elite AI teams and technologies, enhancing innovation capabilities without traditional M&A complexities.

This sector update will review:

- How talent scarcity, rising costs, and regulatory flexibility have made mega acqui-hires Big Tech’s competitive strategy

- Rapid talent acquisition: its approach and impact

- The effects of bypassing regulatory and transaction-related delays and shortening time-to-innovation

- Human capital and the reshaping of the ecosystem

Top AI professionals are now in “treat mode,” highly valued and aggressively pursued, while the overall tech talent landscape sees selective growth in emerging roles and contraction in traditional segments.

Looking ahead, we see strong tailwinds in these types of AI acquisitions that prioritize talent and IP integration, focusing on disruptive AI startups with top technical teams. Success hinges on effectively merging teams and cultures, thereby accelerating innovation. Stakeholders must navigate this evolving landscape carefully to balance consolidation with ecosystem diversity and sustained competitive advantages.

To read Allied Advisers’ full sector update, click here:

Gaurav Bhasin is the Managing Director of Allied Advisers.

Anand Srinivasan is a Managing Partner at Kaizen Analytix, a leading provider of AI, data analytics, and technology services and solutions.

Headquartered in Atlanta, Kaizen is recognized for its speed, flexibility, and ability to rapidly deliver actionable insights that drive sustainable business benefits across the value chain. The company has been spotlighted by Gartner, NPR, Forbes, and Entrepreneur, and named to the Inc. 5000 list as one of America’s fastest-growing private companies.

In this interview, Anand discusses how Kaizen combines business acumen, deep subject matter expertise, and technical know-how to help organizations unlock measurable results, and he shares his perspective on the evolving role of AI and analytics in building competitive advantage.

M.R. Rangaswami: Why is Agentic AI so important for companies right now and what are solutions you’re seeing that are different from traditional generative AI implementations?

Anand: Research from Gartner has named Agentic AI the top tech trend for 2025, and Kaizen is seeing this trend firsthand with our clients. Agentic AI is becoming essential for companies because it addresses many of the shortcomings of traditional AI deployments, which simply produce outputs without context or actionability. Agentic AI instead provides recommendations on how to act upon the data, driving decisions and actions in real time, autonomously and at scale.

We’ve entered a new era where AI agents don’t just analyze data–they act on it. At Kaizen, we take a value-first approach to AI. We don’t chase hype, but we recognize that hype often precedes real value creation. Our agentic AI solutions intentionally combine predictive analytics, agentic frameworks, and generative AI, leveraging each for its greatest strengths, rather than relying on any one technology in isolation.

Predictive analytics provides the traceability and visibility enterprises depend on, while agentic and generative AI unlock new levels of automation and action. By curating solutions that balance these strengths and weaknesses, we deliver systems that are both explainable and adaptive.

M.R.: As enterprises adopt agentic AI at scale, what are the biggest challenges companies face in integrating these intelligent agents into existing workflows?

Anand: Enterprises typically encounter challenges on three fronts: technology, process, and people. On the technology side, legacy systems often struggle to support the API-driven integrations that agentic AI agents require. From a process perspective, some workflows are well-suited for automation, while others need to be redesigned or adapted before AI can add real value.

On the people side, organizations face a shortage of skilled AI engineers and change-management expertise needed to sustain adoption at scale. It’s no surprise that research from IDC indicates that 88 percent of AI pilots fail to reach production, highlighting the difficulty of scaling AI initiatives beyond initial experiments.

Equally important when considering Agentic AI solutions is making sure there is a human element. We find that successful AI deployments include a subject-matter expert who serves as a “human in the loop.” This role provides critical oversight to ensure responsible guardrails, contextual understanding, and alignment with business objectives. The result is agentic AI that is pragmatic, trusted, and designed to drive measurable outcomes–whether in pricing, anomaly detection, or extracting value from unstructured data.

M.R.: How is agentic AI reshaping enterprise decision-making beyond traditional automation?

Anand: Agentic AI is helping enterprises rediscover their “ikigai”—a renewed sense of purpose in how decisions are made and value is created. Post-COVID workforce shifts led to a loss of tribal knowledge about how things actually work inside organizations. Agentic AI fills that gap by capturing institutional wisdom and enabling employees to focus on elevated outcomes and meaningful insights, not just ‘doing the work.’

The result is decision-making that is faster, more adaptive, and more customer-focused. Instead of relying on 5 or 10 times the number of software developers to hard-code complex processes, agentic AI finds easier, more efficient ways to add value to the end customer and scales without legacy constraints. It’s not just automation—it’s a way for companies to scale intelligence and impact across the enterprise.

Agentic AI represents a turning point in how enterprises approach intelligence, automation, and decision-making. Unlike earlier waves of AI that focused mainly on efficiency gains, this new generation of AI agents is reshaping how organizations respond to disruption, scale knowledge, and create customer value. The companies that succeed will be those that balance automation with human judgment, build adaptability into their workflows, and embrace AI not as a standalone tool but as an embedded capability across the enterprise. In many ways, agentic AI is less about replacing work and more about redefining it—elevating the role of people while enabling organizations to compete at an entirely new level.

Over the next five years, agentic AI will move from early adoption to enterprise necessity. Organizations that embrace it will not only streamline operations but also unlock new levels of resilience, adaptability, and customer value. Those that delay risk being left behind as decision-making itself becomes a core source of competitive advantage.

M.R. Rangaswami is the Co-Founder of Sandhill.com

Our friends at Software Equity Group have released their Q2 Quarterly SaaS Report.

As they observed, even with the mixed economic signals, strong buyer appetite persists.

Austin Hammer, Principal at SEG, offers, “SaaS M&A has normalized at a structurally higher level, with Q2 marking another record quarter for volume.” The report reveals, “The market is strong, valuations remain compressed but are stabilizing meaningfully, with momentum building as buyers grow increasingly confident and prepare for a more favorable macro environment.”

Here, Sandhill summarizes two highlights from the M&A portion of the report, and two highlights from their SEG Q2 public market update.

2 SAAS M&A HIGHLIGHTS

1. Aggregate software industry M&A volume remained strong in 2Q25, continuing the trend established in Q1. The broader market recorded 1,126 software M&A transactions in the quarter, matching 1Q25’s high watermark and reinforcing the post-COVID baseline of ~900+ deals per quarter.

This sustained activity reflects growing clarity around monetary policy, easing investor concerns tied to geopolitical volatility, and renewed urgency among buyers to capitalize on scalable, efficient platforms.

2. SaaS M&A continued its record-setting pace in Q2 with 637 transactions, the highest quarterly total SEG has tracked. That brought 1H25 volume to 1,273 deals, up 30% from 1H24. With four consecutive quarters of 530+ deals, SaaS has firmly established itself as a consistent and resilient engine of software M&A.

Recurring revenue, strong retention, and vertical specialization remain key attractors as platform strategies and sponsor activity fuel continued growth.

SAAS PUBLIC MARKET HIGHLIGHTS

1. The SEG SaaS Index™ rose meaningfully from Q1 to Q2, narrowing its YTD loss to 9.1% after being down over 20% in March. Performance remained bifurcated, with top quartile companies rising 7.5% YTD, while the bottom quartile fell over 21%, highlighting growing valuation dispersion.

Investors rewarded consistent, efficient operators while penalizing those with unprofitable or inconsistent models. While broader equity markets posted modest gains on stable rates and easing inflation, public SaaS remained more sensitive to execution quality, with macro relief benefiting only the strongest names.

2. Valuations compressed, with the median EV/TTM revenue multiple falling to 5.1x, down from 5.9x in 1Q25 and 5.8x in 2Q24. However, dispersion remained wide: top quartile companies traded at 9.0x, compared to just 2.8x in the bottom quartile. Companies with >40% Weighted Rule of 40 scored 12.4x, nearly 2.5x the Index median, reinforcing that balanced performance still commands a premium.

Similarly, companies with 120% net retention traded at 11.1x, a 117% premium to the Index median, underscoring how deeply investors value durable, expanding customer relationships.
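The premiums quoted above can be reproduced from the multiples in the text. This is only a back-of-the-envelope sketch: the three multiples are taken from the highlight, while the `premium_over` helper and the variable names are illustrative, and small differences from the published figures are likely due to rounding in the quoted inputs.

```python
# Multiples quoted in the public market highlight (EV/TTM revenue).
index_median = 5.1
rule_of_40_multiple = 12.4       # companies with >40% Weighted Rule of 40
high_retention_multiple = 11.1   # companies with 120% net retention


def premium_over(multiple: float, baseline: float) -> float:
    """Premium of `multiple` relative to `baseline`, as a fraction (1.0 = 100%)."""
    return multiple / baseline - 1.0


rule_of_40_ratio = rule_of_40_multiple / index_median
retention_premium = premium_over(high_retention_multiple, index_median)

print(f"Rule of 40 cohort vs. median: {rule_of_40_ratio:.2f}x")
print(f"Net retention cohort premium: {retention_premium:.1%}")
```

The results come out at roughly 2.4 times the median and a premium of just under 118%, in line with the "nearly 2.5x" and "117% premium" figures in the report summary.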

To download the full report, click here:

Clare Christopher is the editor at Sandhill.com

Few leaders in enterprise technology have had as broad and lasting an impact on how software is delivered and consumed as Dr. Arthur S. Hitomi. As President, CEO, and co-founder of Numecent, Dr. Hitomi has spent his career reimagining software deployment, from his early work shaping Internet standards like HTTP 1.1 and REST, to inventing the patented Cloudpaging technology that now powers seamless, on-demand application delivery across modern enterprise environments.

With 38 patents issued and many more pending, Dr. Hitomi is a recognized pioneer in on-demand application delivery, application virtualization, streaming, and desktop transformation. At Numecent, he has led both technology development and business strategy, guiding the creation of Cloudpaging and Cloudpager, which allow enterprises to deliver even their most complex Windows applications to any desktop, virtual or physical, without reengineering.

In this interview, Dr. Hitomi shares his perspective on the real-world pain points Numecent solves, how their platform is helping enterprises modernize faster and more cost-effectively, and how innovations like Cloudpaging AI are scaling transformation across the software ecosystem.

M.R.: What real pain does Numecent’s Cloudpaging technology solve for enterprises managing legacy Windows applications?

Dr. Hitomi: One of the most persistent and costly challenges facing enterprise IT today is maintaining business-critical legacy and custom Windows applications through platform upgrades and updates, particularly as Microsoft’s Windows 10 end-of-support deadline looms. Applications written years or even decades ago often contain hardcoded dependencies, outdated middleware, or components that conflict with modern environments. This makes them incompatible with Windows 11 or virtual desktop infrastructures like Azure Virtual Desktop (AVD) or Windows 365, and incredibly costly to maintain in an ever-changing environment.

Numecent’s Cloudpaging technology addresses this problem at the root. Rather than repackaging, recoding, or reengineering applications (which can take weeks per app), Cloudpaging dynamically containerizes applications so they can run on modern platforms without modification. Its patented technology breaks applications into “pages” and streams only what’s needed to launch, with the rest delivered on demand. Applications behave as though they’re locally installed, yet remain isolated, with full support for drivers, services, middleware, and even conflicting Java versions.

The result? Enterprises can migrate their desktop environments without leaving legacy apps behind or undergoing massive reengineering efforts. Cloudpaging enables seamless compatibility, accelerates digital transformation, and saves millions in development and operational costs.
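The general idea of splitting an application into pages and fetching only what is needed can be illustrated with a small Python sketch. This is a generic lazy-loading cache written for illustration only, not Numecent's patented implementation; `PagedApp`, `fetch_from_server`, the page size, and the sample image are all hypothetical.

```python
from typing import Callable, Dict, Iterable

PAGE_SIZE = 4  # bytes per page; tiny so the example is easy to follow


class PagedApp:
    """Serves an application image page by page, fetching pages lazily."""

    def __init__(self, fetch_page: Callable[[int], bytes],
                 launch_pages: Iterable[int]):
        self._fetch_page = fetch_page
        self._cache: Dict[int, bytes] = {}
        self.fetch_count = 0
        # Prefetch only the pages required to launch.
        for page_no in launch_pages:
            self._load(page_no)

    def _load(self, page_no: int) -> bytes:
        # Fetch a page once, then serve it from the local cache.
        if page_no not in self._cache:
            self._cache[page_no] = self._fetch_page(page_no)
            self.fetch_count += 1
        return self._cache[page_no]

    def read(self, offset: int, length: int) -> bytes:
        """Read a byte range, pulling any missing pages on demand."""
        if length <= 0:
            return b""
        first = offset // PAGE_SIZE
        last = (offset + length - 1) // PAGE_SIZE
        data = b"".join(self._load(p) for p in range(first, last + 1))
        start = offset - first * PAGE_SIZE
        return data[start:start + length]


# Hypothetical "remote" image standing in for a packaged application.
image = b"LAUNCHCODE-rarely-used-feature-data"


def fetch_from_server(page_no: int) -> bytes:
    # Stand-in for a network request to a streaming server.
    return image[page_no * PAGE_SIZE:(page_no + 1) * PAGE_SIZE]


app = PagedApp(fetch_from_server, launch_pages=[0, 1])
fetched_at_launch = app.fetch_count   # only the launch pages so far
header = app.read(0, 6)               # served entirely from cache
tail = app.read(11, 6)                # triggers on-demand page fetches
```

In this sketch the application "launches" after two page fetches, and further pages are pulled only when a read touches them, which is the behavior the answer above describes at a high level.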

M.R.: How does Numecent’s Cloudpaging and Cloudpager platform deliver long-term cost and operational advantages?

Dr. Hitomi: Traditional application deployment methods are slow, resource-intensive, and difficult to manage at scale, especially in environments where users are distributed across hybrid workforces and multiple device types. IT teams spend an inordinate amount of time troubleshooting installations, repackaging apps for different environments, and managing updates and rollbacks across multiple platforms.

Cloudpaging changes the equation by virtualizing applications at the page or binary level. It enables them to launch within seconds, run at native speed, and operate without the need for system reboots or changes to the host OS. Applications are streamed just-in-time, meaning organizations drastically reduce bandwidth usage and storage overhead. More importantly, Cloudpaging maintains application integrity, so each app runs exactly as intended, no matter the underlying system.

Layered on top is Cloudpager, Numecent’s cloud-native application container orchestration platform. Cloudpager provides a single pane of glass to manage application delivery, versioning, entitlements, and compliance policies across physical desktops, virtual desktop infrastructure (VDI), DaaS, and cloud workspaces. With real-time visibility and control, IT teams can dynamically update or roll back applications, enforce usage restrictions, and reduce downtime, all without disrupting end users.

The combination of Cloudpaging and Cloudpager empowers enterprises to adopt modern desktop strategies while reducing total cost of ownership, improving end-user experience, and creating a more agile, DevOps-style approach to desktop application management.

M.R.: What growth or opportunity does Numecent see with the launch of Cloudpaging AI and its broader ecosystem impact?

Dr. Hitomi: The recent introduction of Cloudpaging AI represents a major leap forward in accelerating application modernization at enterprise scale. Historically, even with powerful tools like Cloudpaging, creating containers for complex legacy apps still required skilled engineers to carefully sequence application components and dependencies. With Cloudpaging AI, Numecent leverages machine learning to automatically analyze and package applications in minutes, drastically reducing the effort and time required.

This innovation unlocks a massive opportunity: enterprises can now containerize and migrate hundreds, even thousands, of legacy and homegrown applications quickly and cost-effectively. It also means partners, system integrators, and managed service providers can scale their services faster and offer end-to-end desktop transformation solutions without the typical bottlenecks.

As organizations move toward hybrid cloud and modern digital workspaces, Numecent’s technology suite – including Cloudpaging, Cloudpager, and AI Packager – makes it possible to maintain operational continuity while accelerating transformation. Enterprises can retire legacy infrastructure, embrace AVD or Windows 365, and still run mission-critical apps without compromise. It’s a powerful enabler for digital resilience, security, and long-term IT agility.

M.R. Rangaswami is the Co-Founder of Sandhill.com

By Mike Gentile, CEO of CISOSHARE

No matter what industry you work in, you know generative artificial intelligence (AI) is here, rewriting the rules of business in plain sight.

Marketing teams churn out campaigns in minutes. Developers push code in the time it takes to sip a coffee. The pace is breathtaking, but amid the rush, one question is splashed in red on the walls:

Who is making sure this power is used safely, ethically, and securely? For too many organizations, the answer is no one, and that’s a problem.

According to Gartner, more than half of enterprises are piloting or using generative AI. However, fewer than 10% have established governance frameworks to manage the risks.

This gap indicates a fault line running beneath the future of business. Without proper oversight, efficiency gains come at the expense of security and accountability.

Just recently, IBM’s Cost of a Data Breach Report found that one in five organizations reported a breach due to security incidents involving “shadow AI,” or AI tools that employees use without organizational knowledge or approval. These breaches were more likely to result in the compromise of personally identifiable information and intellectual property, making them extremely costly.

In other words, organizational leaders, and the Chief Information Security Officers (CISOs) they typically task with addressing these risks, can’t afford to sit back. These are the people who must step forward and take the lead in governing AI before that fault line cracks wide open.

Let’s talk about it.

Why CISOs Must Step Forward

Data leaks. Biased algorithms making decisions no one can explain. Shadow AI tools creeping into workflows without approval. Third-party integrations that open doors no one is watching.

These are all landmines sitting under every organization rushing to adopt AI without a plan.

The truth is, AI is moving too fast for half-measures. You can’t kick this to a committee, and you can’t hand it off to IT and hope for the best. The stakes are too high, and the risks too immediate.

This is exactly why CISOs have to step into the arena. They’re the ones who know where systems break, where blind spots form, and how attackers think.

CISOs see the fault lines before anyone else. They’re responsible for protecting trust, livelihoods, and entire enterprises. In this moment, that means governing AI in new and proactive ways.

The Risks Few Want to Talk About

Generative AI has dazzled leaders with its potential, but beneath the hype lies a hard truth. Some of the top risks include:

Shadow AI

Unauthorized AI tools slip into the workplace, operating outside the guardrails of security. This problem starts small—an employee downloads a flashy app to make their job easier. There’s no approval, no oversight. Suddenly, sensitive data is flowing into unvetted systems, compliance gaps open, and security leaders are left blind.

Regulatory Exposure

Lawmakers aren’t asleep. Across the globe, governments are drafting rules that will demand accountability.

Eventually (if not currently), companies that can’t demonstrate control over their AI use will face fines as well as public hits to their reputation.

Long-Term Operational Risk

AI models adapt, drift, and change in ways no one can fully predict. Without governance, today’s productivity tool can become tomorrow’s breach vector, eroding stability from the inside out.

In general, the risk is as existential as it is technical. If AI undercuts trust, both customers and employees will lose faith in the organization itself.

Questions Every CISO Should Be Asking

Before greenlighting any AI initiative, security leaders must insist on clarity. At minimum, every CISO should be asking:

- Who owns accountability for AI outputs and errors?

- How is sensitive data protected from misuse or leakage?

- What compliance obligations apply today, and which may apply tomorrow?

- How will AI decisions be audited, monitored, and explained?

- Where does human oversight begin and end?

When a CISO raises these questions, it won’t always draw nods of agreement. Sometimes it slows the conversation, and sometimes it shifts the mood around new technology and innovations.

However, that’s the weight of real leadership. Today’s CISOs need the courage to steady the AI course when everyone else is eager to sprint ahead.

A Call to Leadership

AI is rewriting the rules of business, but governance will determine whether those rules lead to resilience or collapse.

This starts with executive management recognizing that AI governance is important. From there, they need to assess whether they have the internal security team to address it, and hire that expertise if they don’t.

CISOs have spent decades building programs to balance speed, progress, and security. Now is the moment to apply that discipline to artificial intelligence tools.

To remain silent, to allow adoption without oversight, is to abandon the very role CISOs were created for. The path forward is clear: security must lead, or organizations will stumble into risks they cannot contain.

The story of AI won’t be defined by those who rush headlong without caution. It will be shaped by leaders who bring vision, discipline, and resolve, and I believe that mantle belongs to the CISO.

About the Author:

Mike Gentile, CEO of CISOSHARE, has spent his career guiding organizations through the challenge of building and scaling security programs. Known for his practical approach and deep expertise, he works with enterprises worldwide to turn security strategies into living, operational systems.

Today, his focus includes helping leaders confront new frontiers in risk management, including the fast-moving arena of AI governance.

Having previously served as Kurrent’s Technical Support Manager, where he specialized in event-driven architectures and CQRS implementations, Lokhesh brings hands-on expertise in building complex event sourcing systems with cutting-edge AI integration.

This conversation is for those who want insights on AI-driven database solutions and on what organizations can do to prepare for the coming changes in database automation.

M.R. Rangaswami: How is Kurrent’s MCP Server reimagining database interactions for both technical and non-technical users?

Lokhesh Ujhoodha: There’s a broader shift in how people interact with complex systems using MCP Servers. Traditionally, working with a database has meant writing structured queries, managing schemas and understanding low-level details like indexing or projection logic. That’s fine if you’re a backend engineer or database administrator, but it creates steep barriers for others who often have questions they can’t easily get answered without writing code.

With the introduction of MCP and emerging open-source servers, the way users interact with databases is starting to shift. Now users can interact with their data through natural language either directly with an AI assistant or through integrated tools. This means you can ask for a stream of events, write a new projection or debug logic through a conversational interface.

For technical users, this accelerates development cycles by enabling faster prototyping, allowing developers to test projection logic and debug issues through conversational commands. For less technical users, it lowers the barrier to engaging with data in meaningful ways. In both cases, it’s reimagining the interface layer between humans and systems by abstracting it into intelligent workflows that are easier to reason about and iterate on.
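For readers unfamiliar with the term, a "projection" in an event-sourced system is simply a function that folds a stream of events into a queryable view. The Python sketch below illustrates that idea in the abstract; it does not use Kurrent's API or an MCP server, and the `Event` type, event names, and `project_order_totals` function are hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, Iterable


@dataclass(frozen=True)
class Event:
    stream: str    # e.g. "order-1"
    type: str      # e.g. "OrderPlaced"
    amount: float


def project_order_totals(events: Iterable[Event]) -> Dict[str, float]:
    """Fold an event stream into a read model: net total per order stream."""
    totals: Dict[str, float] = {}
    for event in events:
        if event.type == "OrderPlaced":
            totals[event.stream] = totals.get(event.stream, 0.0) + event.amount
        elif event.type == "OrderRefunded":
            totals[event.stream] = totals.get(event.stream, 0.0) - event.amount
        # Any other event type is ignored by this projection.
    return totals


events = [
    Event("order-1", "OrderPlaced", 100.0),
    Event("order-2", "OrderPlaced", 40.0),
    Event("order-1", "OrderRefunded", 25.0),
]
totals = project_order_totals(events)
print(totals)
```

A conversational interface of the kind described above would, in principle, let a user ask for logic like this in plain language and then iterate on it, rather than writing it by hand.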

M.R.: Why is democratizing access to real-time data architectures so important as we look ahead to a future where agentic AI workflows become mainstream?

Lokhesh: The next generation of AI systems requires access to data that’s timely, contextual and complete. Unlike traditional AI that relies on static training sets or batched data, agentic workflows need to be fed with live signals and historical context in real time. Without that, their actions risk being either irrelevant or outright wrong.