The Internet of Things (IoT) is an important catalyst and perhaps the killer app for the deluge of big and fast data. From smart consumer products like Nest and Fitbit to industrial controls that monitor factories and buildings to the “connected car,” a rapidly growing population of IoT endpoints is generating massive and swiftly moving streams of information. To fully exploit the IoT opportunity, organizations must ingest and analyze these new and diverse feeds in real time and move from what has been a handcrafted approach to one that is automated and trusted. It’s a task that is much easier said than done, requiring not just new tooling but new thinking.

The pressures that IoT data apply to enterprise information systems will force organizations to upgrade their dataflow infrastructure and processes with an eye towards establishing disciplined, continuous dataflow operations. This means employing new technologies focused on data in motion to augment the myriad tools used to manage data at rest. Just as important, organizations will have to adopt an operational mindset when it comes to monitoring dataflows (e.g., availability and accuracy metrics), much as they do for application and network performance.

There are three reasons the IoT forces a switch to continuous data operations.

1. Data distrust caused by data drift

This may come as a surprise, but unlike highly curated enterprise data sources, you can’t trust big data a priori. That’s because IoT and other big data feeds are often emitted by systems in different governance zones, which makes them subject to data drift — unexpected and unpredictable changes to data structure and semantics that can break downstream processing of the data.

For instance, a sensor manufacturer performs a rolling firmware upgrade that adds or drops fields, or changes the structure or meaning of fields. Such changes cause the data to become inconsistent across the device population, eroding the data’s trustworthiness and jeopardizing the integrity of downstream analyses based on it as well as any actions or decisions based on those analyses. In short, data drift means you can’t just “fire and forget” your dataflow logic; you must always be on the lookout for change.
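To make the firmware-upgrade scenario concrete, here is a minimal sketch of a drift check that compares each incoming record against the fields a pipeline expects. The field names (`device_id`, `temperature_c`, `timestamp`) and the `detect_drift` helper are hypothetical, chosen only for illustration; a production dataflow would likely track drift across the whole device population rather than record by record.

```python
# Hypothetical expected schema for a sensor reading: field name -> Python type.
EXPECTED_FIELDS = {"device_id": str, "temperature_c": float, "timestamp": int}


def detect_drift(record, expected=EXPECTED_FIELDS):
    """Return a list of drift findings for one record (empty if none)."""
    findings = []
    # Fields the pipeline relies on that the device no longer sends.
    for name in sorted(expected.keys() - record.keys()):
        findings.append(f"missing field: {name}")
    # Fields the device has started sending that the pipeline does not know.
    for name in sorted(record.keys() - expected.keys()):
        findings.append(f"unexpected field: {name}")
    # Fields that are still present but whose type has changed.
    for name in sorted(expected.keys() & record.keys()):
        if not isinstance(record[name], expected[name]):
            actual = type(record[name]).__name__
            wanted = expected[name].__name__
            findings.append(f"type change: {name} is {actual}, expected {wanted}")
    return findings


# A reading from a device on the old firmware, and one after an upgrade.
old_reading = {"device_id": "a1", "temperature_c": 21.5, "timestamp": 1483228800}
new_reading = {"device_id": "a1", "temp_f": 70.7, "timestamp": "2017-01-01T00:00:00Z"}

print(detect_drift(old_reading))
print(detect_drift(new_reading))
```

A check like this catches structural drift only. A change in meaning, such as a field switching from Celsius to Fahrenheit without being renamed, would need value-level monitoring as well.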

2. From siloed pipelines to dataflow topologies

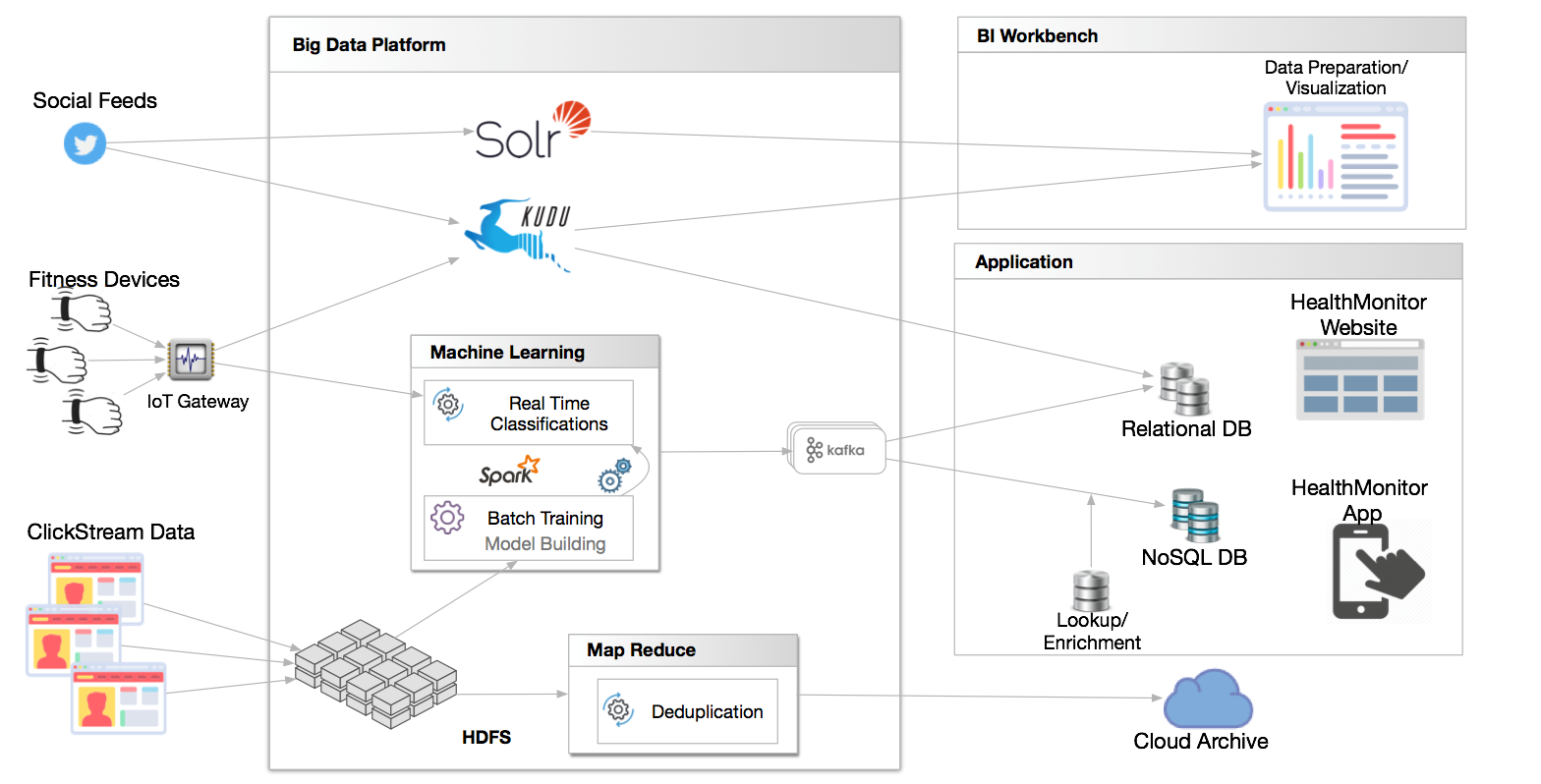

In the same way that data structures can change, so can dataflow topologies, the web of linkages between data sources, compute/storage infrastructure and analytics systems. In traditional data integration, a pipeline to move transaction data from a few applications to a data warehouse was a simpler orchestrated process. In contrast, IoT spawns vastly more complicated dataflows such as the one shown below.

An example of the complexity of IoT and other big data dataflow topologies

IoT data needs to be correlated with other data streams, tied to historical or master data or run through a machine-learning algorithm. It may be shipped to multiple analytics platforms or to storage facilities located on premises or in a private or public cloud. It is consumed in analytics applications, used by data scientists and may even trigger automated operational processes such as personalizing a web or mobile app experience.

Even subtle changes to these dataflows can create ripple effects that cause serious problems that cascade across multiple systems and are difficult to diagnose.

3. Time-sensitive analysis and use

Whereas revenge is a dish best served cold, IoT data is best served hot and fresh. It may trigger actions or decisions that are only valid for the time frame of a specific event such as arriving at a specific location or surpassing a certain temperature or heart-rate threshold. In these cases, time is not your friend. Since the data must be transformed, filtered and analyzed as it arrives, there may not be time to store it first. In fact, given the high volumes and transient nature of the data, you might choose not to store it at all.
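As a rough illustration of analyzing data as it arrives rather than storing it first, the sketch below filters a stream of readings in a single pass and drops anything too old to act on. The `hot_readings` function, the field names and the threshold values are all assumptions made for this example, not part of any particular product.

```python
import time


def hot_readings(stream, threshold_c, max_age_s, now=time.time):
    """Yield readings above threshold_c that are still fresh enough to act on.

    Readings are examined one at a time as they arrive; nothing is stored.
    """
    for reading in stream:
        age_s = now() - reading["timestamp"]
        if age_s > max_age_s:
            continue  # Too old: the event this reading describes has passed.
        if reading["temperature_c"] > threshold_c:
            yield reading


# Hypothetical readings; the clock is fixed at t=100 so the result is repeatable.
readings = [
    {"device_id": "a", "temperature_c": 90.0, "timestamp": 99.0},  # hot, fresh
    {"device_id": "b", "temperature_c": 95.0, "timestamp": 10.0},  # hot, stale
    {"device_id": "c", "temperature_c": 20.0, "timestamp": 99.5},  # cool, fresh
]

alerts = list(hot_readings(iter(readings), threshold_c=80.0, max_age_s=5.0,
                           now=lambda: 100.0))
print([r["device_id"] for r in alerts])
```

Because the function is a generator, it holds only the current reading in memory, which fits the case where the data is never stored at all.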

These three factors (data drift, complex topologies and real-time analysis) will lead organizations to manage dataflows the way they already manage modern applications, networks and IT security: as a living, breathing operation that must run reliably and automatically on a continuous basis. In some ways, data is the final frontier in the quest for continuous IT operations.

In fact, we believe that in response to the pressure for continuous operations, companies will create an organizational unit — we call it the Data Operations Center (DOC) — to ensure availability and accuracy of data in motion. In the same way companies use APM, NPM and SIEM tools, they will acquire dataflow performance management (DPM) tools to empower the DOC to do its job.

In summary, IoT initiatives offer new opportunities for companies to better serve their customers, become more efficient and reduce business risks. But data drift from IoT devices also forces companies to mature their data operations to ensure they maximize the value of the data. To address this, in 2017, enterprises must build continuous data operations capabilities that are in tune with the high-volume, time-sensitive and dynamic nature of today’s data.

Rick Bilodeau is the VP of marketing at StreamSets, where he leads corporate marketing, product marketing, demand generation, digital marketing and field marketing functions.