For the past few years, we have heard a lot about the benefits of augmenting the enterprise data warehouse (EDW) with Hadoop. The data warehouse vendors as well as the Hadoop vendors have been very careful with their terminology, showcasing how Hadoop can handle unstructured data while the EDW remains the central source of truth in an enterprise.

That message was a desperate attempt by Teradata to hold on to its worldview while partnering with Hadoop vendors to ensure they stay on message as well.

Well, the game is over. With the rapid evolution of robust enterprise features like security, data management, governance and enhanced SQL capabilities, Hadoop is all set to be the single source of truth in the enterprise.

Data warehouse augmentation with Hadoop

One of the first use cases for Hadoop in the enterprise was off-loading ETL tasks from the enterprise data warehouse. Since Hadoop is excellent at batch processing, running ETL on Hadoop was an obvious choice. This provided the big benefit of saving precious resources on the EDW, leaving it to handle the more interactive and complex analytical queries. However, the term “off-load” or “migrate” ETL to Hadoop had negative connotations for data warehouse vendors that were concerned that this meant that Hadoop could do things that were traditionally done in the EDW at a much lower cost.

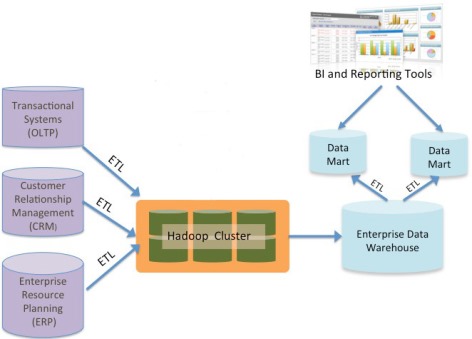

Thus was born the term “augmentation.” Hadoop was not off-loading the EDW; it was augmenting it. The typical DW augmentation architecture thus shows Hadoop running ETL jobs and sending the results to the EDW.
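The flow just described can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the batch step stands in for an ETL job that would run on Hadoop, an in-memory SQLite database stands in for the EDW, and the names `RAW_EVENTS`, `batch_etl`, `load_into_edw` and the `daily_sales` table are hypothetical.

```python
import sqlite3
from collections import defaultdict

# Hypothetical raw records, standing in for files landed on the Hadoop cluster.
RAW_EVENTS = [
    "2015-01-01,store-1,19.99",
    "2015-01-01,store-1,5.01",
    "2015-01-01,store-2,10.00",
    "bad-record",
    "2015-01-02,store-1,7.50",
]


def batch_etl(lines):
    """Batch step (the work off-loaded from the EDW): parse, clean, aggregate."""
    totals = defaultdict(float)
    for line in lines:
        parts = line.split(",")
        if len(parts) != 3:
            continue  # drop malformed records
        day, store, amount = parts
        totals[(day, store)] += float(amount)
    # Emit one summary row per (day, store), sorted for a stable load order.
    return [(day, store, round(total, 2))
            for (day, store), total in sorted(totals.items())]


def load_into_edw(conn, rows):
    """Load step: only the small, aggregated result is sent to the warehouse."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS daily_sales (day TEXT, store TEXT, total REAL)"
    )
    conn.executemany("INSERT INTO daily_sales VALUES (?, ?, ?)", rows)
    conn.commit()


edw = sqlite3.connect(":memory:")  # assumption: SQLite as a stand-in for the EDW
summary = batch_etl(RAW_EVENTS)
load_into_edw(edw, summary)

# Downstream BI queries keep hitting the warehouse exactly as before.
result = edw.execute(
    "SELECT store, SUM(total) FROM daily_sales GROUP BY store ORDER BY store"
).fetchall()
```

The point of the sketch is the division of labor: the heavy parsing and aggregation happen outside the warehouse, and the warehouse receives only the summarized rows it needs to answer interactive queries.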

The advantage of this architecture is that the EDW is still the center of the data universe in an enterprise. Downstream data marts, business intelligence (BI) tools and applications continue to work in exactly the same manner, thus requiring no new tools or training for the business analysts. For first-time Hadoop users, this architecture makes sense: start small with a new technology; don’t bite off more than you can chew.

Other DW use cases

Of course, once Hadoop entered the enterprise, there was no stopping it. Within data warehousing itself, it is now used in a myriad of applications, including:

- Off-loading computations: Run ETL on Hadoop

- Off-loading storage: Move cold data (not often used) from EDW to Hadoop

- Backup and Recovery: Replace tape backup with an active Hadoop cluster

- Disaster Recovery: Use Hadoop in the DR site to back up the EDW

Notice that none of the above touches unstructured data. We are only talking about the traditional EDW and how Hadoop can make a difference by reducing cost and increasing performance while providing improved access to the data resources. Some of our customers have realized significant cost savings using Hadoop to augment the EDW.
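As a rough illustration of the storage off-loading use case, the following Python sketch splits rows into those that stay in the EDW and those that would move to Hadoop. It is a minimal sketch, not a real migration tool: the one-year threshold, the `last_accessed` field and the `split_hot_cold` helper are all assumptions made for the example.

```python
from datetime import date, timedelta

# Assumed policy: data untouched for more than a year counts as "cold".
COLD_AFTER = timedelta(days=365)


def split_hot_cold(rows, today, cold_after=COLD_AFTER):
    """Return (hot, cold): hot rows stay in the EDW, cold rows move to Hadoop."""
    hot, cold = [], []
    for row in rows:
        age = today - row["last_accessed"]
        (cold if age > cold_after else hot).append(row)
    return hot, cold


# Hypothetical table metadata with a last-access date per row.
rows = [
    {"id": 1, "last_accessed": date(2015, 3, 1)},
    {"id": 2, "last_accessed": date(2012, 6, 15)},
    {"id": 3, "last_accessed": date(2014, 12, 31)},
]

hot, cold = split_hot_cold(rows, today=date(2015, 6, 1))
```

In practice the threshold would come from observed query patterns, and the cold set would be written to cheaper storage on the cluster while remaining queryable.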

Hadoop for analytics

The big promise of Hadoop is the potential to gain new business insights with advanced predictive analytics enabled by the processing of new sources of unstructured data (social media, multimedia, etc.) in combination with data from the EDW. However, using it for online, real-time analytics has been a problem until now. Hadoop’s architecture and MapReduce framework make it slow for real-time processing; its design point was batch.

This is the reason for the continued center stage of the EDW. Databases like Teradata are excellent at performing complex, analytical queries on large amounts of data at great speeds.

SQL on Hadoop

Although the Hadoop community recognized the need for SQL early on, it is only in the last year or two that great strides have been made to create an enterprise-grade SQL solution that can meet the needs of data warehousing analytics.

The Hortonworks Stinger initiative has dramatically improved performance for interactive queries in Hive, the dominant SQL-on-Hadoop technology.

Cloudera developed Impala from scratch, touting MPP-database-like performance.

Meanwhile, Apache Spark has gained momentum as a replacement for MapReduce, with a focus on real-time, streaming analytics. Spark SQL holds much promise and, along with Hive and Impala, gives developers and users multiple technologies to choose from.

As adoption increases and the products mature, Hadoop will be more powerful than the MPP databases of today. We will see a shift from “augmentation” to “replacement” of the EDW with Hadoop.

The new enterprise data architecture

With the pieces now in place for real-time, streaming analytics, along with enterprise features such as security (all Hadoop vendors now have security built in) and data management and governance with the likes of Apache Falcon, Hadoop is ready to become the single source of truth in the enterprise. No longer does it need to play second fiddle to the data warehouse; it IS the data warehouse. In fact, in many Internet and technology companies, the data warehouse is built solely on Hadoop. Let us examine what this architecture might look like.

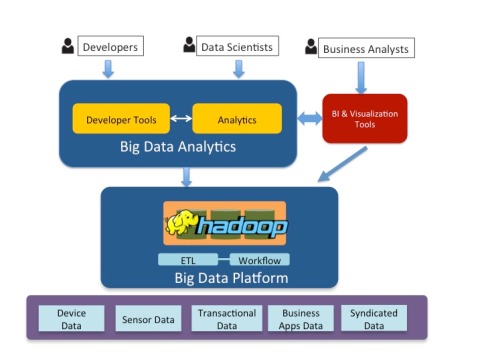

In the new enterprise architecture, Hadoop takes center stage. Businesses comfortable with their existing BI and reporting products can continue to use them as the products adapt to access the Big Data platform. Enterprise developers can build custom tools and applications while data scientists use Big Data exploratory and predictive analytics tools to obtain new business insights. This is, after all, the true promise of Big Data: combine multiple sources of information and create predictive and prescriptive analytical models.

This transition from Hadoop augmenting the data warehouse to replacing it as the source of truth in larger enterprises can be undertaken in a phased approach with new analytical applications being served from Hadoop while the EDW still feeds the legacy BI applications.

Data warehousing vendors recognize this and are coming up with creative ways to stay relevant. Both Teradata and, more recently, Oracle have technologies that allow queries to span Hadoop and the database, allowing Hadoop to process data stored in it while the database continues to handle the structured data. This is another good intermediate step in the transition process (albeit making one more dependent on the EDW, not less!).

Conclusion

It is a matter of time before the enterprise data warehouse as we know it, with expensive proprietary appliances and software technologies, becomes obsolete. The open source Hadoop ecosystem is thriving and evolving very rapidly to perform all of the storage, compute and analytics required while providing dramatic new functionality to handle huge amounts of unstructured and semi-structured data. All of this functionality comes at a fraction of the cost. This ecosystem has proven that it is no longer true that innovation happens only in closed source, proprietary companies.

Hadoop as the central source of truth in the enterprise is almost here. If your enterprise has yet to begin the Hadoop journey, step on the pedal — otherwise, you may be left behind.

Shanti Subramanyam is founder and CEO at Orzota, Inc., a Big Data services company whose mission is to make Big Data easy for consumption. Shanti is a Silicon Valley veteran and a performance and benchmarking expert. She is a technical leader with many years of experience in distributed systems and their performance acquired at companies including Sun Microsystems and Yahoo! Contact Shanti via Twitter @shantiS, on LinkedIn, or http://orzota.com/contact.